The Loup Ventures NCAA bracket contest isn’t as hotly contested as we thought it would be. We entered Bing’s AI bracket into our pool, and it’s just as busted as the others. In fact, Bing’s bracket will finish at the bottom of our pool, in 7th place, regardless of the outcome of tonight’s game. We would like to think that we outsmarted AI, but the reality is that predicting the outcome of the NCAA tournament is more a matter of luck than skill. Bing’s poor showing doesn’t mean the algorithm is broken; it was just unlucky this year.

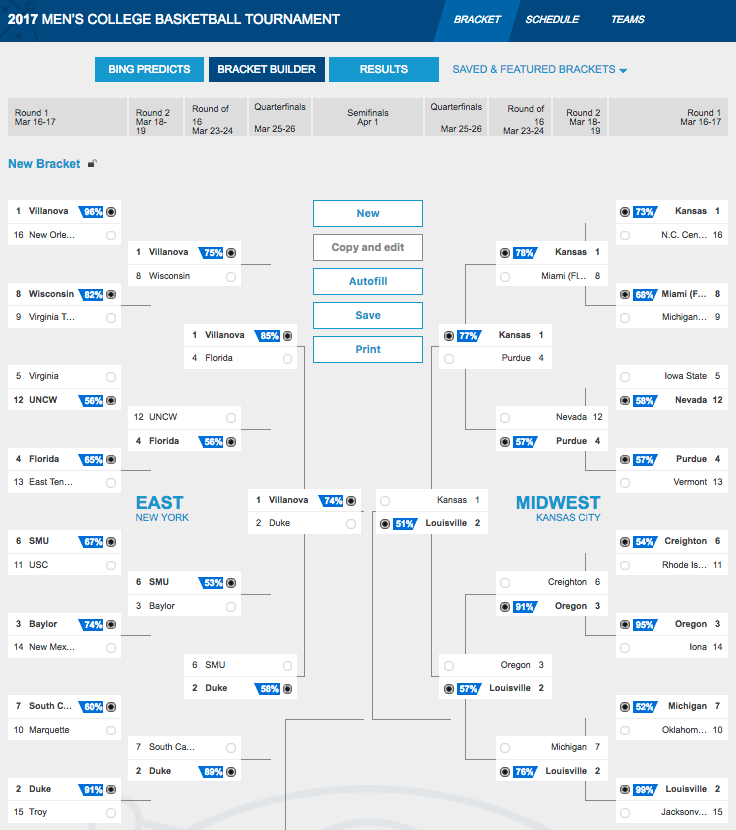

* Bing Predicts 2017 NCAA Basketball Bracket

To date, Bing has picked 39 of the tournament’s 67 games correctly, including the opening round. It went 2 of 4 in the opening round, 24 of 32 in the 1st Round, 9 of 16 in the 2nd Round, and 4 of 8 in the Sweet Sixteen, before going 0 for 4 in the Elite Eight, which ended its chances of winning. Bing’s bracket tells a different story if you look at it now, because Bing re-picked the winners of upcoming matchups after each round. Even with those updates, it picked only 47 of 66 games correctly heading into tonight’s game. In the updated rounds, Bing got Final Four weekend right by picking Gonzaga and UNC to win their semifinals, with UNC as its pick to take home the crown.

How does Bing predict winners? The Bing Predicts algorithm factors in millions of data points in an effort to build the best possible predictive model. It looked at every college basketball game played in the last 15 years to find correlations between measurable statistics and wins. For each game, the algorithm outputs the probability that a team will win. It isn’t meant to name a certain winner, but the higher the percentage, the bigger the gap between the two teams.

Wired asked Walter Sun, an architect of the Bing Predicts algorithm, about some of the algorithm’s most important considerations. Defensive efficiency, strength of schedule, coaching rankings, and miles traveled are a few of the metrics it measures.

For defensive efficiency, strong defensive teams have historically had more success in the tournament. For strength of schedule, the theory is that the seeding committee favors Power 5 conference teams, which leaves schools in smaller conferences underrated. For coaching rankings, experienced coaches have a big impact on their teams’ success in the postseason. For miles traveled, teams that travel farther have a harder time winning, especially when they travel across time zones.
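To make the idea of a win probability concrete, here is a toy logistic model that turns the four factors above into a probability. This is a hypothetical sketch, not Bing’s actual algorithm: the `Team` fields, the `WEIGHTS` values, and the example teams are all invented for illustration. Only the direction of each effect (better defense, tougher schedule, and stronger coaching help; more travel hurts) comes from the factors described above.

```python
import math
from dataclasses import dataclass

# Hypothetical team profile built from the four factors above.
# Values are illustrative standardized scores (0 = tournament average).
@dataclass
class Team:
    name: str
    defense: float   # defensive efficiency (higher = stingier defense)
    schedule: float  # strength of schedule (higher = tougher schedule)
    coaching: float  # coaching ranking score (higher = more experienced coach)
    miles: float     # miles traveled to the game site, in thousands

# Invented weights, NOT Bing's: they only set the sign and rough size of each effect.
WEIGHTS = {"defense": 0.6, "schedule": 0.4, "coaching": 0.3, "miles": -0.2}

def score(team: Team) -> float:
    """Combine a team's factors into a single linear rating."""
    return (WEIGHTS["defense"] * team.defense
            + WEIGHTS["schedule"] * team.schedule
            + WEIGHTS["coaching"] * team.coaching
            + WEIGHTS["miles"] * team.miles)

def win_probability(a: Team, b: Team) -> float:
    """Probability that team a beats team b: a logistic function of the rating gap."""
    gap = score(a) - score(b)
    return 1.0 / (1.0 + math.exp(-gap))

if __name__ == "__main__":
    favorite = Team("Favorite", defense=1.5, schedule=1.0, coaching=1.0, miles=0.5)
    underdog = Team("Underdog", defense=0.2, schedule=-0.5, coaching=0.0, miles=2.0)
    p = win_probability(favorite, underdog)
    print(f"{favorite.name} beats {underdog.name} with probability {p:.2f}")
```

With these made-up numbers the favorite comes out at roughly 88%. A larger rating gap pushes the probability closer to 1, but a logistic curve never reaches exactly 1, so the underdog always keeps some chance.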

While it’s helpful to understand some of the metrics driving the Bing Predicts algorithm, a closer look at how AI predictions work explains why the cards are stacked against Bing.

Why didn’t Bing give better advice? The probability that Bing assigns to any given team winning is very likely the most accurate prediction possible, but a probability is still a probability. If Bing says a team has an 80% chance to win, that team still has a 20% chance to lose. Picking winners in a bracket, however, is binary: you choose one team to win, period. Those 20% outcomes do happen, so brackets are bound to be busted, and with 67 games to predict, at least one upset is almost a statistical guarantee.
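A quick calculation shows why a perfect bracket is so unlikely even when every pick is a strong favorite. This sketch makes two simplifying assumptions that real brackets violate: every game is independent, and every favorite has the same win probability. The 80% and 95% figures are hypothetical inputs, not Bing’s numbers.

```python
GAMES = 67  # total games in the tournament, including the opening round

def perfect_bracket_probability(p_favorite: float, games: int = GAMES) -> float:
    """Chance that every favorite wins, assuming independent games
    that all share the same favorite win probability."""
    return p_favorite ** games

def at_least_one_upset_probability(p_favorite: float, games: int = GAMES) -> float:
    """Chance that at least one favorite loses (complement of a perfect bracket)."""
    return 1.0 - perfect_bracket_probability(p_favorite, games)

def expected_misses(p_favorite: float, games: int = GAMES) -> float:
    """Expected number of games in which the favorite loses."""
    return games * (1.0 - p_favorite)

if __name__ == "__main__":
    for p in (0.80, 0.95):
        print(f"favorites at {p:.0%}: "
              f"perfect bracket {perfect_bracket_probability(p):.2e}, "
              f"at least one upset {at_least_one_upset_probability(p):.4f}, "
              f"expected misses {expected_misses(p):.1f}")
```

If every favorite had an 80% chance, the odds of a perfect bracket would be about 3 in 10 million, with roughly 13 misses expected. Even at 95% per game, the chance of a perfect bracket is only about 3%.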

Bing could be better at NCAA predictions if it could collect data about what happens in the run-up to each game: how hydrated each player is at game time, how much rest each player got the night before, what is going on with each player psychologically, and so on. If Bing could know these incremental factors, they would adjust what the historical data predicts. Even then, every possession can be swung by random events, and the flow of the game changes how teams play later on. The Patriots’ epic comeback in the Super Bowl is a perfect example. They had a 99%+ chance to lose, which means they had a less than 1% chance to win, but not a 0% chance.

What does this mean for the future of AI? We shouldn’t overreact. Every action we take as humans can be viewed as a prediction of a potential outcome, and sometimes those predictions are wrong. Take driving as an example. Every action we perform while driving is based on a predictive calculation in our heads about where we want the car to go and how to get it there. About 1.3 million people die in traffic accidents every year worldwide, so we aren’t very good at those predictions. Machines already drive better than humans, because they don’t get distracted by phones or emotional about bad drivers.

While skeptics may laugh that the robots failed to predict the outcome of a basketball tournament, AI is still a better predictor of outcomes than any human because of the amount of data it can incorporate into its predictions. We shouldn’t expect AI to be right 100% of the time, because it won’t be, but it will be right more often than humans making the same predictions. We humans got lucky with our brackets this year, but we shouldn’t expect an easy repeat next year.

Disclaimer: We actively write about the themes in which we invest: artificial intelligence, robotics, virtual reality, and augmented reality. From time to time, we will write about companies that are in our portfolio. Content on this site including opinions on specific themes in technology, market estimates, and estimates and commentary regarding publicly traded or private companies is not intended for use in making investment decisions. We hold no obligation to update any of our projections. We express no warranties about any estimates or opinions we make.